AI more human to elders – and that’s bad news

According to a new study, older adults perceive artificial intelligence as more human-like than younger adults do – and fall for it more easily.

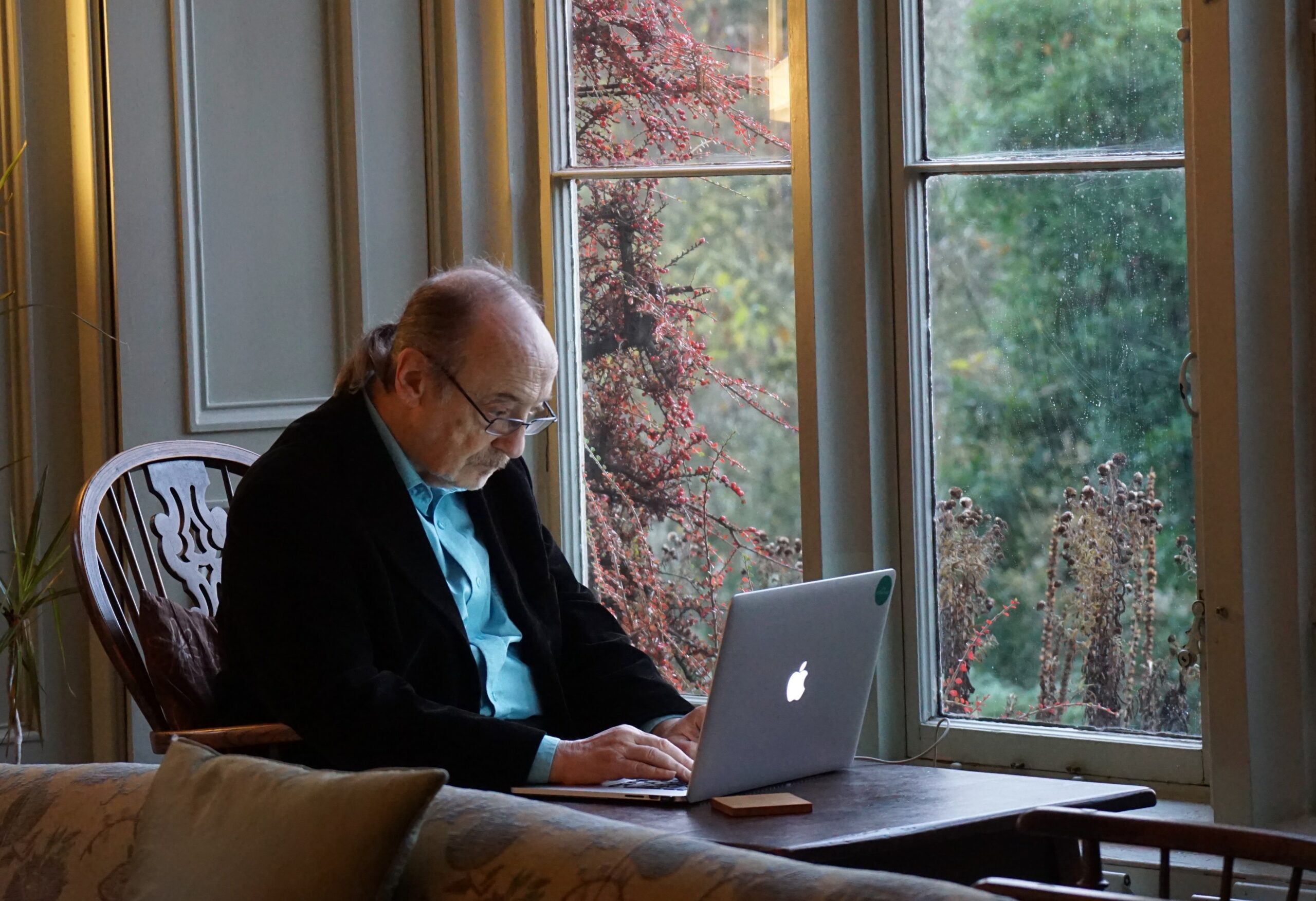

Artificial intelligence (AI) is increasingly present in all of our lives, from newer offerings like ChatGPT to more established voice systems such as automated phone services, self-checkouts, Apple’s Siri and Amazon’s Alexa. While these technologies largely benefit us, they can also be used for harmful purposes, for instance in fraudulent or scam calls, so it is important that we are able to recognise them.

According to a recent Baycrest study, older adults appear to be less able than younger adults to distinguish computer-generated (AI) speech from human speech.

“Findings from this study on computer-generated AI speech suggest that older adults may be at a higher risk of being taken advantage of,” says Dr. Björn Herrmann, Baycrest’s Canada Research Chair in Auditory Aging, Scientist at Baycrest’s Rotman Research Institute and lead author of this study. “While this area of research is still in its infancy, further findings could lead to the development of training programs for older adults to help them navigate these challenges.”

In this study, the first to examine how older adults recognise AI-generated speech, younger (~30 years) and older (~60 years) adults listened to sentences spoken by 10 different human speakers (five male, five female) and sentences created using 10 AI voices (five male, five female). In one experiment, participants rated how natural the human and AI voices sounded. In another, they judged whether each sentence was spoken by a human or by an AI voice.

Results showed that compared to younger adults, older adults found AI speech more natural and were less able to correctly identify when speech was generated by a computer.

The reasons for this remain unclear and are the subject of follow-up research by Dr. Herrmann and his team. They have ruled out hearing loss and familiarity with AI technology as factors; instead, the difference could be related to older adults’ diminished ability to recognise different emotions in speech.

“As we get older, we seem to pay more attention to the actual words in speech than to its rhythm and intonation when trying to get information about the emotions being communicated,” says Dr. Herrmann. “It could be that recognition of AI speech relies on the processing of rhythm and intonation rather than words, which could in turn explain older adults’ reduced ability to identify AI speech.”

In addition to helping develop AI-related training programs, the results of this and future studies could help inform the design of interactive AI technology for older adults. This kind of technology often relies on AI speech and is increasingly used in medical, long-term care and other support settings for older adults. For example, therapeutic AI robots can be used to comfort and calm individuals experiencing agitation due to dementia.

By better understanding how older adults perceive AI speech, we can ensure that AI technologies effectively meet their needs, ultimately improving their quality of life and helping them lead a life of purpose, inspiration and fulfilment.

This study was supported by the Canada Research Chairs program and the Natural Sciences and Engineering Research Council of Canada.